On March 25, 2026, the Model Context Protocol (MCP), Anthropic's open spec for connecting AI agents to external tools, databases, and APIs, crossed 97 million monthly SDK installs. Roughly 18 months earlier, MCP had been an experimental sidecar with about 2 million installs. That growth has outpaced Kubernetes, which took nearly four years to reach comparable enterprise deployment density. By late April 2026, the ecosystem includes more than 5,800 community and enterprise servers, and every major AI platform (Claude, ChatGPT, Gemini, VS Code, Cursor, AWS, Cloudflare, Microsoft) ships MCP-compatible tooling as the default mechanism by which agents reach the outside world.

In short: a standards war that took the rest of the cloud industry a decade has been settled in eighteen months. If you write software that touches a database, an API, or a web app, MCP is now part of your reality whether you've adopted it deliberately or not.

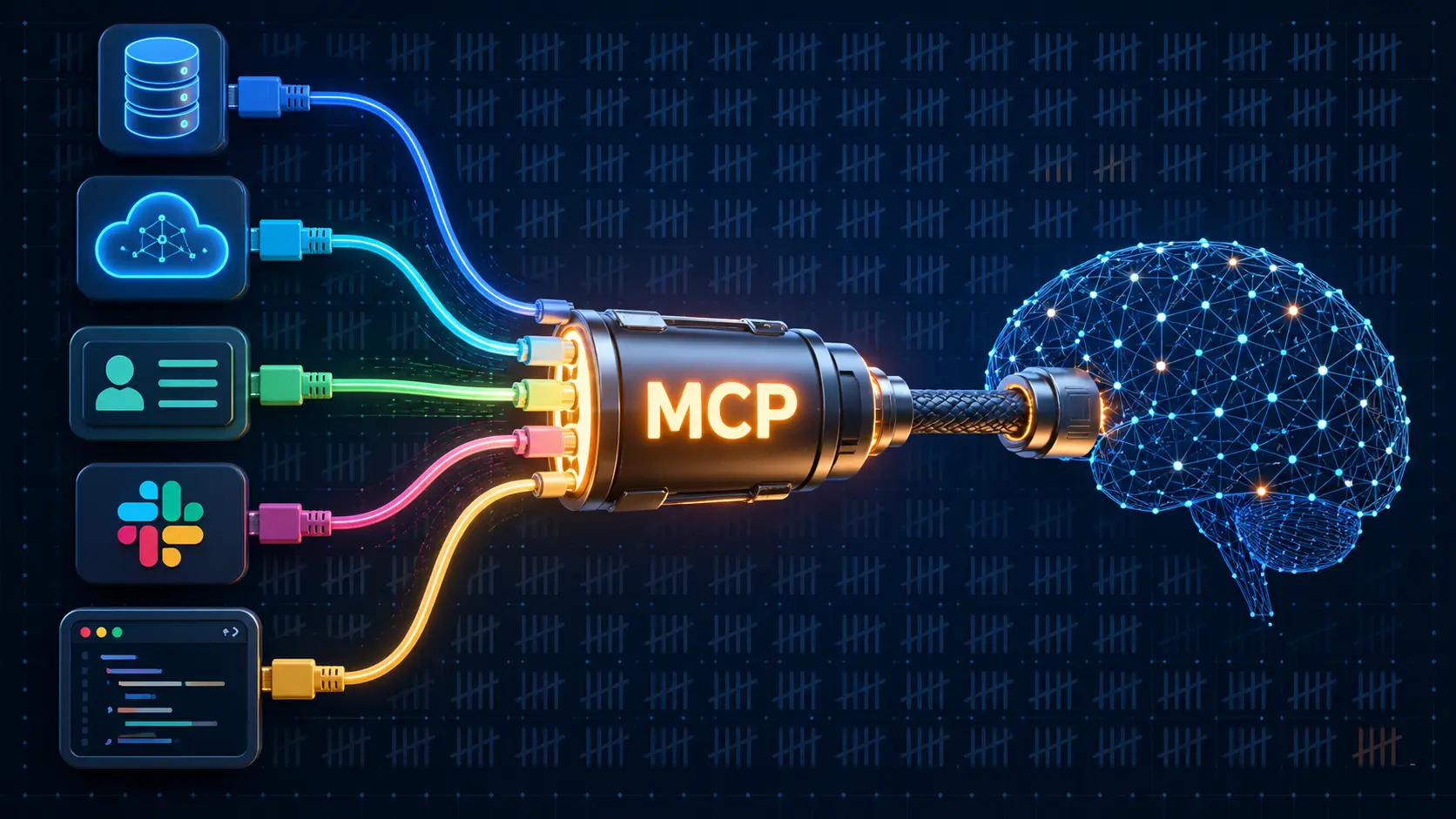

What an MCP server actually is, in one paragraph

An MCP server is a small process that exposes a structured, discoverable contract: "here are the tools I provide, here are the resources I can read, here are the prompts I expose, and here is the schema for each." An AI agent (running inside Claude Desktop, Cursor, ChatGPT's agent mode, or your own application) connects, discovers, and calls those tools without anyone hand-coding integration glue. Messages on the wire are JSON-RPC 2.0, and each tool's inputs are described with JSON Schema. The standard transports are stdio and Streamable HTTP; custom transports are possible. The trust boundary is explicit. If you've ever shipped a REST API or a database driver, you can ship an MCP server in an afternoon.

That last sentence is why the curve is shaped the way it is. MCP is trivial to author. It is also useful immediately. Those two properties together produce hockey-stick adoption.
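To make the "discover, then call" contract concrete, here is a minimal sketch of the two requests an agent sends most often. It uses only the Python standard library and is not the official SDK. The method names `tools/list` and `tools/call` come from the MCP spec; the `add` tool, the `TOOLS` registry, and the `handle` function are hypothetical names invented for illustration, and the sketch skips the `initialize` handshake and the transport entirely.

```python
import json

# Hypothetical tool registry: name -> description, JSON Schema for inputs, handler.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        "handler": lambda args: args["a"] + args["b"],
    }
}


def _error(req_id, code, message):
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request string with one response string."""
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")
    params = req.get("params") or {}

    if method == "tools/list":
        # Discovery: advertise each tool's name, description, and input schema.
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(req_id, -32602, "Unknown tool")
        value = tool["handler"](params.get("arguments", {}))
        # Tool results are returned as a list of content items.
        result = {"content": [{"type": "text", "text": str(value)}]}
    else:
        return _error(req_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

A real server would validate arguments against the schema and run this loop over stdio or HTTP, which is the part an SDK normally handles for you.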

Where the 5,800 servers actually live

The ecosystem has organized itself into recognizable buckets. Databases — Postgres, MySQL, BigQuery, Snowflake, MongoDB, Redis — were the first wave; an agent that can answer "what's our churn looking like this month?" without screen-scraping a dashboard is irresistible. CRM and productivity — Salesforce, HubSpot, Notion, Linear, Asana, Slack, Gmail — were the second. Cloud providers — AWS, GCP, Azure, Cloudflare — published official servers in Q1 2026. Dev tools — GitHub, GitLab, Sentry, Datadog — followed. E-commerce — Shopify, Stripe, BigCommerce — and analytics — GA4, Mixpanel, Amplitude — are the wave currently shipping into production.

The under-noticed implication is that for the first time in the history of enterprise software, the integration layer is converging on a single contract that the AI consumer (the agent) can discover and reason about at runtime, rather than the developer hand-coding a per-vendor integration at build time.

My take: three things this changes for working developers

First, the build-vs-buy calculus around internal tooling has flipped. Before MCP, "give the data team a self-serve interface for our warehouse" meant a frontend project, a permissions layer, a year of design reviews, and a roadmap. After MCP, it can mean: write a 200-line MCP server that exposes three carefully scoped tools (run_query, list_tables, describe_schema), wire it into Claude or Cursor, and let the LLM be the UI. The team gets a chat interface in a week. The frontend roadmap reclaims a quarter. This trade is going to play out in ten thousand engineering orgs over the next twelve months.
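As a rough illustration of what "carefully scoped" could look like, here is a sketch of the three tool bodies against SQLite, using only the standard library. The tool names come from the paragraph above; everything else (the `customers` table, `MAX_ROWS`, `make_demo_db`, the SELECT-only rule) is an assumption made for the example, and a real warehouse would need its own driver, a read-only role, and a stricter query policy than a prefix check.

```python
import sqlite3

MAX_ROWS = 100  # hypothetical cap so one call cannot dump a whole table


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database standing in for a real replica."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, plan TEXT)")
    conn.executemany("INSERT INTO customers (plan) VALUES (?)", [("free",), ("pro",)])
    conn.commit()
    # Second layer of defense: the connection itself now rejects writes.
    conn.execute("PRAGMA query_only = ON")
    return conn


def list_tables(conn: sqlite3.Connection) -> list:
    """Return the names of all tables, sorted alphabetically."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return [row[0] for row in rows]


def describe_schema(conn: sqlite3.Connection, table: str) -> list:
    """Return column names and declared types for one known table."""
    if table not in list_tables(conn):
        raise ValueError(f"unknown table: {table}")
    # PRAGMA cannot take bound parameters, so the name is checked against
    # the catalog above and quoted as an identifier here.
    quoted = table.replace('"', '""')
    cols = conn.execute(f'PRAGMA table_info("{quoted}")').fetchall()
    return [{"name": col[1], "type": col[2]} for col in cols]


def run_query(conn: sqlite3.Connection, sql: str) -> list:
    """Run a single SELECT statement and return at most MAX_ROWS rows."""
    # Crude first layer: refuse anything that does not start with SELECT.
    if not sql.lstrip().lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(sql).fetchmany(MAX_ROWS)
```

The design choice worth copying is the layering: the tool refuses non-SELECT text, the connection refuses writes, and the row cap bounds how much any one call can return.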

Second, your security team's threat model is now incomplete if it doesn't include MCP. Every server is a tool surface that an agent can call with whatever credentials the server holds. The LMDeploy SSRF that got exploited 13 hours after disclosure earlier this week (CVE-2026-33626) is the early-warning shot for this category. Expect a tide of advisories through the rest of 2026 covering MCP servers that load arbitrary URLs, fan out cloud credentials, or expose internal admin endpoints. If you ship MCP servers, default-deny outbound, scope tools narrowly, audit the resource surface, and treat every fetch operation as a security boundary.
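One way to read "default-deny outbound" in code is an allowlist check that every fetch-style tool must pass before it touches the network. The sketch below uses only the standard library; `ALLOWED_HOSTS`, the `example.com` hostnames, and `is_fetch_allowed` are hypothetical. It is a pre-flight check only: it does not follow redirects or defend against DNS rebinding, so a production server would also need enforcement at connection time or at the network layer.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this server's fetch tool may contact.
ALLOWED_HOSTS = {"api.example.com", "docs.example.com"}


def is_fetch_allowed(url: str) -> bool:
    """Default-deny: permit only https URLs whose host is explicitly allowlisted."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
    except ValueError:
        return False  # malformed URL, e.g. a broken IPv6 literal

    if parts.scheme != "https":
        return False  # blocks http, file, ftp, and everything else

    try:
        ipaddress.ip_address(host)
        # Literal IPs (loopback, link-local metadata endpoints, and so on)
        # are never allowed, whatever the address.
        return False
    except ValueError:
        pass  # not an IP literal, so fall through to the allowlist

    return host in ALLOWED_HOSTS
```

Note that the check runs on the parsed hostname, not on the raw string, so a URL such as `https://api.example.com@127.0.0.1/` is rejected because its real host is the IP after the `@`.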

Third, the value of "owning your schema" just went up. The companies whose MCP servers are easiest to talk to will become the preferred substrate for agents — and the agents drive the developers. Stripe noticed this two years before everyone else. So did Cloudflare. The next wave of differentiation between competing SaaS products will be whose MCP server is the most expressive, the best-documented, and the safest to grant tool permissions to. Marketing was the moat in 2018. Developer experience was the moat in 2022. MCP server quality is the moat in 2026.

The longer arc

Standards wins are usually invisible until they're decisive. TCP/IP looked unglamorous in 1985. HTTP looked redundant in 1995. JSON looked trivial in 2005. MCP in early 2026 has the same flavor: a small, boring spec, adopted everywhere, settling a coordination problem that everyone knew existed but no one wanted to solve themselves. The protocol war is over.

What comes next is the harder, less visible work: governance, identity, capability scoping, audit, agent-to-agent authentication, billing for tool calls, and the slow construction of the Linux Foundation's Agentic AI Foundation as the neutral home for the next layer. None of that will trend on social media. All of it will determine whether 2027's agents are useful or merely terrifying.

For practitioners reading this on a Sunday: pick one internal data source — a Postgres replica, an internal admin API, a knowledge-base search endpoint — and write an MCP server for it this week. Spin it up locally. Connect it to the AI tool your team already pays for. See what your team starts asking it. The thing you'll learn from that two-hour exercise is going to outweigh the next quarter of "AI strategy" meetings.

The plumbing has been standardized. The interesting work happens on top of it.