If you have been paying attention to GitHub's trending page in early 2026, one name has been almost impossible to ignore: OpenClaw. Affectionately nicknamed "Molty," this open-source personal AI assistant exploded from relative obscurity to over 100,000 GitHub stars within its first week of going viral, and it has continued to climb ever since. Created by Peter Steinberger, the founder of PSPDFKit, OpenClaw represents a fundamentally different approach to how we interact with AI in our daily lives.

What Makes OpenClaw Different

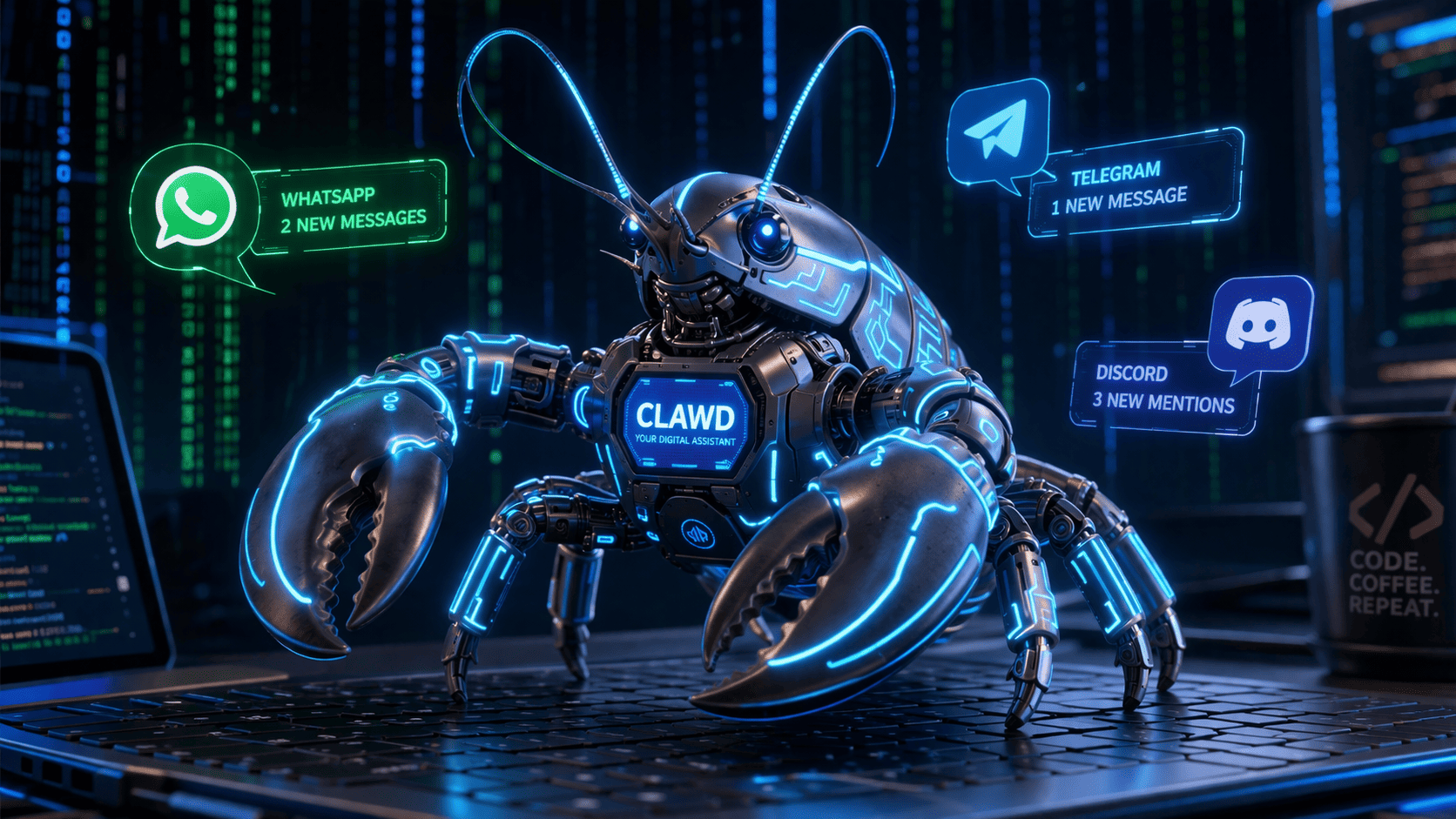

Unlike cloud-dependent AI assistants that funnel your conversations through corporate servers, OpenClaw runs on hardware you control. At its core, it is a local gateway process (a background daemon running on your own machine or on a VPS you manage) that connects large language models to the messaging platforms you already use. WhatsApp, Telegram, Slack, Discord, Signal, iMessage, LINE, Microsoft Teams, Matrix, and many more are supported out of the box. You do not need to learn a new interface or switch to yet another app. You simply talk to your AI assistant wherever you are already talking to people.

This architecture has profound implications for privacy. When the gateway is paired with a locally hosted model, your data stays on your device, your conversations are not harvested for training, and your personal context remains personal. In an era where data privacy concerns continue to dominate headlines, this local-first approach is not just a technical choice; it is a statement about how AI should respect its users.

The Developer Ecosystem Angle

From a developer perspective, OpenClaw is fascinating because of its extensibility. The project supports over 50 integrations, and because it is open source, developers can add new ones freely. The LLM-powered agent runtime can take real actions in the world — scheduling meetings, sending messages, managing tasks, and querying databases — all through natural language commands sent via your favorite chat app.
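To make the idea of an agent runtime "taking actions" concrete, here is a minimal sketch of one common way to do it: the model emits a structured action request, and the runtime looks up and calls a registered function. This is not OpenClaw's actual API. The `tool` decorator, the `TOOLS` registry, the JSON request shape, and both example tools are hypothetical names chosen for illustration.

```python
import json
from typing import Callable, Dict

# Hypothetical registry mapping an action name to a plain Python function.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function under `name` so the runtime can call it."""
    def decorator(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return decorator


@tool("schedule_meeting")
def schedule_meeting(title: str, when: str) -> str:
    # A real tool would call a calendar API; this one only reports back.
    return f"Scheduled '{title}' for {when}"


@tool("send_message")
def send_message(to: str, body: str) -> str:
    # A real tool would hand the text to a messaging channel.
    return f"Sent to {to}: {body}"


def run_action(model_output: str) -> str:
    """Parse a JSON action request (assumed model output) and run the tool."""
    try:
        request = json.loads(model_output)
    except json.JSONDecodeError:
        return "error: model output was not valid JSON"
    if not isinstance(request, dict):
        return "error: action request must be a JSON object"
    name = request.get("action")
    fn = TOOLS.get(name)
    if fn is None:
        # Only registered actions can run; everything else is rejected.
        return f"error: unknown action {name!r}"
    try:
        return fn(**request.get("args", {}))
    except TypeError as exc:
        return f"error: bad arguments ({exc})"


print(run_action(
    '{"action": "schedule_meeting", "args": {"title": "Standup", "when": "09:00"}}'
))
```

Keeping an explicit allow-list of actions, rather than executing whatever the model proposes, is one simple guardrail for an assistant that can act on your behalf.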

For PHP and Flutter developers in particular, OpenClaw's architecture provides an excellent case study in building local-first, privacy-respecting applications. The gateway pattern it employs — receiving messages from multiple platforms, routing them through an intelligent agent layer, and dispatching actions back — is applicable to many other domains. Flutter developers building cross-platform apps can draw inspiration from how OpenClaw achieves platform-agnostic communication, while PHP developers maintaining backend services can study its approach to webhook handling and real-time message routing.
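The gateway pattern described above (receive, route, dispatch) can be sketched in a few lines. This is an illustrative sketch, not OpenClaw's implementation. The `Gateway` class, the `Message` type, the adapter functions, and `echo_agent` are all hypothetical, and the payload shapes are simplified stand-ins rather than the platforms' full webhook schemas.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Message:
    """Platform-neutral message used inside the gateway."""
    platform: str
    sender: str
    text: str


# Hypothetical adapters: each converts a simplified platform payload
# into the shared Message type.
def from_telegram(payload: dict) -> Message:
    msg = payload["message"]
    return Message("telegram", str(msg["from"]["id"]), msg["text"])


def from_slack(payload: dict) -> Message:
    event = payload["event"]
    return Message("slack", event["user"], event["text"])


class Gateway:
    def __init__(self, agent: Callable[[Message], str]) -> None:
        self.agent = agent
        self.adapters: Dict[str, Callable[[dict], Message]] = {}
        self.senders: Dict[str, Callable[[str, str], None]] = {}

    def register(self, platform: str,
                 adapter: Callable[[dict], Message],
                 sender: Callable[[str, str], None]) -> None:
        self.adapters[platform] = adapter
        self.senders[platform] = sender

    def handle(self, platform: str, payload: dict) -> str:
        if platform not in self.adapters:
            raise ValueError(f"no adapter registered for {platform!r}")
        message = self.adapters[platform](payload)      # 1. receive and normalize
        reply = self.agent(message)                     # 2. route through the agent
        self.senders[platform](message.sender, reply)   # 3. dispatch the reply
        return reply


def echo_agent(message: Message) -> str:
    """Stand-in for an LLM call; a real agent would query a model here."""
    return f"[{message.platform}] you said: {message.text}"


# Usage: an in-memory outbox replaces real network calls.
outbox: List[Tuple[str, str, str]] = []
gateway = Gateway(echo_agent)
gateway.register("telegram", from_telegram,
                 lambda to, text: outbox.append(("telegram", to, text)))
gateway.register("slack", from_slack,
                 lambda to, text: outbox.append(("slack", to, text)))

gateway.handle("telegram", {"message": {"from": {"id": 42}, "text": "hello"}})
gateway.handle("slack", {"event": {"user": "U123", "text": "status?"}})
```

Because the agent only ever sees the normalized `Message`, supporting another platform in this sketch means writing one adapter and one sender, with no change to the agent layer. The same separation carries over to a PHP webhook endpoint or a Flutter client.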

Why This Matters Beyond the Star Count

The explosive growth of OpenClaw signals something deeper than just another popular repository. It reflects a growing fatigue with centralized AI platforms and a hunger for tools that put users in control. A self-hosted AI assistant requires more technical effort than simply opening a chat window, so the fact that one could achieve this level of adoption tells us that developers and technically minded users are willing to invest effort for sovereignty over their AI interactions.

It also highlights the maturation of the open-source AI ecosystem. Two years ago, running a capable AI assistant locally was impractical for most people. Today, with models like Llama, Mistral, and others available at various sizes, the performance gap between local and cloud models has narrowed significantly. OpenClaw sits at the intersection of these trends, making local AI not just possible but genuinely convenient.

My Perspective

I believe OpenClaw represents the beginning of a fundamental shift in how personal AI assistants will work. The current dominant model, where your AI lives inside a company's cloud and you access it through their interface, increasingly feels like the AOL era of the internet. OpenClaw points toward a future where your AI is truly yours: running on your hardware, connected to your tools, and loyal only to you.

The challenge ahead is not technical but social. Can open-source personal AI assistants maintain quality and safety without centralized oversight? Can the community build guardrails that prevent misuse without sacrificing the flexibility that makes these tools powerful? These are the questions that will define the next chapter of personal AI, and projects like OpenClaw are forcing us to confront them now rather than later.

For developers, the message is clear: learn to build local-first AI applications. The tools are ready, the demand is real, and the community is growing faster than anyone predicted.